Stanford University’s Institute for Human-Centered Artificial Intelligence (HAI) has released the seventh annual issue of its comprehensive AI Index report, written by an interdisciplinary team of academic and industrial experts.

This edition has more content than previous editions, reflecting the rapid evolution of AI and its growing significance in our everyday lives. It examines everything from which sectors use AI the most to which country is most nervous about losing jobs to AI. But one of the most salient takeaways from the report is AI’s performance when pitted against humans.

For people who haven’t been paying attention, AI has already beaten us in a frankly shocking number of significant benchmarks. In 2015, it surpassed us in image classification, then basic reading comprehension (2017), visual reasoning (2020), and natural language inference (2021).

AI is getting so clever, so fast, that many of the benchmarks used to this point are now obsolete. Indeed, researchers in this area are scrambling to develop new, more challenging benchmarks. To put it simply, AIs are getting so good at passing tests that now we need new tests, not to measure competence, but to highlight areas where humans and AIs are still different, and to find where we still have an advantage.

It’s worth noting that the results below reflect testing with these old, possibly obsolete, benchmarks. But the overall trend is still crystal clear:

AI Index 2024

Look at those trajectories, especially how the most recent tests are represented by a close-to-vertical line. And remember, these machines are virtual toddlers.

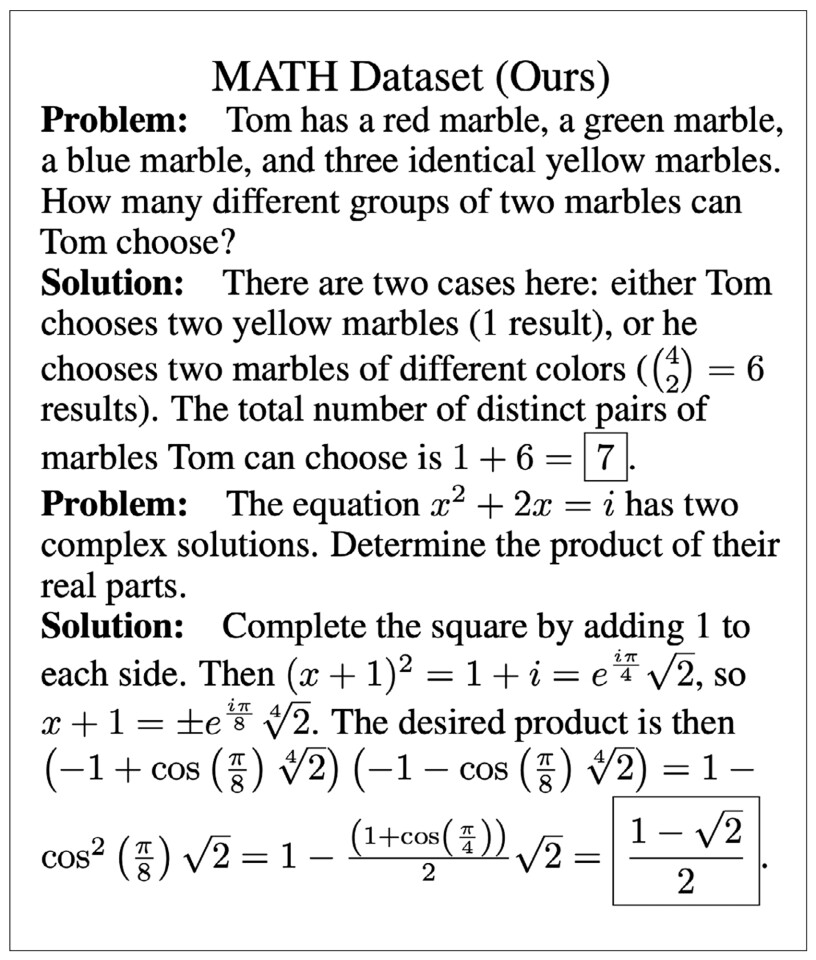

The new AI Index report notes that in 2023, AI still struggled with complex cognitive tasks like advanced math problem-solving and visual commonsense reasoning. However, ‘struggled’ here might be misleading; it certainly doesn’t mean AI did badly.

Performance on MATH, a dataset of 12,500 challenging competition-level math problems, improved dramatically in the two years since its introduction. In 2021, AI systems could solve only 6.9% of problems. By contrast, in 2023, a GPT-4-based model solved 84.3%. The human baseline is 90%.

And we’re not talking about the average human here; we’re talking about the kinds of people who can solve test questions like this:

Hendrycks et al./AI Index 2024

That’s where things are at with advanced math in 2024, and we’re still very much at the dawn of the AI era.

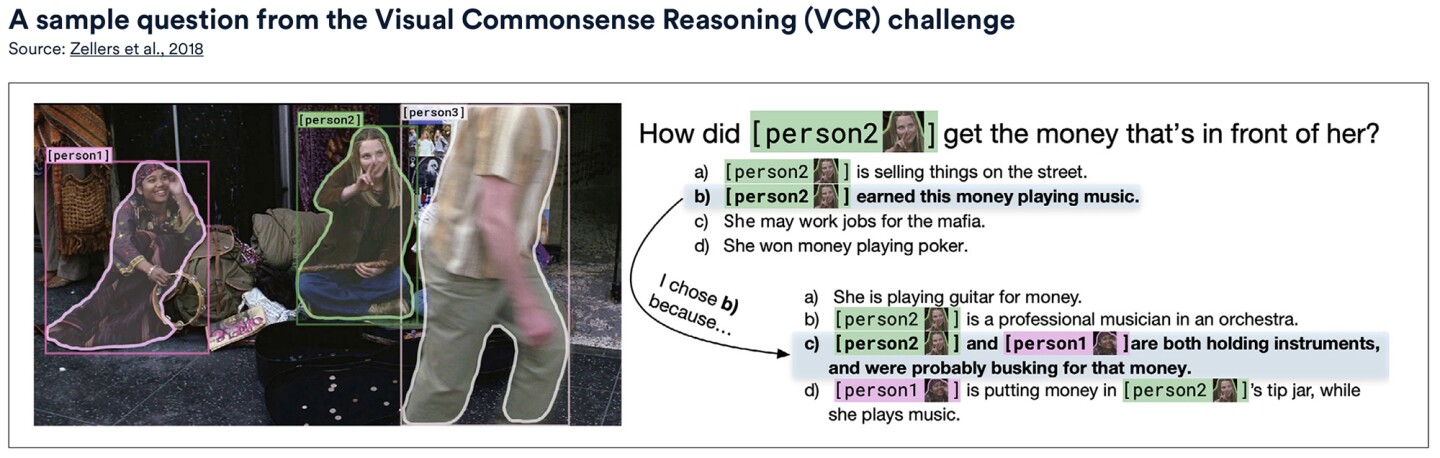

Then there’s visual commonsense reasoning (VCR). Beyond simple object recognition, VCR assesses how AI uses commonsense knowledge in a visual context to make predictions. For example, when shown an image of a cat on a table, an AI with VCR should predict that the cat might jump off the table or that the table is sturdy enough to hold it, given its weight.

The report found that between 2022 and 2023, the best VCR score rose by 7.93% to 81.60, against a human baseline of 85.
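To put the benchmark figures quoted above in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the 7.93% figure is a relative (not percentage-point) increase, and the function names are purely illustrative; none of this comes from the report itself.

```python
def share_of_baseline(score, human_baseline):
    """Fraction of the human baseline that a model's score reaches."""
    return score / human_baseline


def implied_previous_score(score, relative_increase_pct):
    """Back out last year's score, ASSUMING the increase is relative."""
    return score / (1 + relative_increase_pct / 100)


# Figures as quoted in the article.
vcr_2023, vcr_human = 81.60, 85.0
math_2023, math_human = 84.3, 90.0

print(f"VCR:  {share_of_baseline(vcr_2023, vcr_human):.1%} of human baseline")
print(f"MATH: {share_of_baseline(math_2023, math_human):.1%} of human baseline")
print(f"Implied 2022 VCR score: {implied_previous_score(vcr_2023, 7.93):.2f}")
```

Under that assumption, the 2022 VCR score works out to roughly 75.6, and both benchmarks sit within a few points of the human baseline.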

Zellers et al./AI Index 2024

Cast your mind back, say, five years. Imagine even thinking about showing a computer a picture and expecting it to ‘understand’ the context enough to answer that question.

Nowadays, AI generates written content across many professions. But, despite a great deal of progress, large language models (LLMs) are still prone to ‘hallucinations,’ a very charitable term pushed by companies like OpenAI, which roughly translates to “presenting false or misleading information as fact.”

Last year, AI’s propensity for ‘hallucination’ was made embarrassingly plain for Steven Schwartz, a New York lawyer who used ChatGPT for legal research and didn’t fact-check the results. The judge hearing the case quickly picked up on the legal cases the AI had fabricated in the filed paperwork and fined Schwartz US$5,000 (AU$7,750) for his careless mistake. His story made worldwide news.

HaluEval was used as a benchmark for hallucinations. Testing showed that for many LLMs, hallucination is still a significant issue.

Truthfulness is another thing generative AI struggles with. In the new AI Index report, TruthfulQA was used as a benchmark to test the truthfulness of LLMs. Its 817 questions (about topics such as health, law, finance and politics) are designed to challenge commonly held misconceptions that we humans often get wrong.

GPT-4, released in early 2023, achieved the highest performance on the benchmark with a score of 0.59, almost three times higher than a GPT-2-based model tested in 2021. Such an improvement indicates that LLMs are progressively getting better when it comes to giving truthful answers.

What about AI-generated images? To understand the exponential improvement in text-to-image generation, check out Midjourney’s efforts at drawing Harry Potter since 2022:

Midjourney/AI Index 2024

That’s 22 months’ worth of AI progress. How long would you expect it would take a human artist to reach a similar level?

Using the Holistic Evaluation of Text-to-Image Models (HEIM), models were benchmarked for their text-to-image generation capabilities across 12 key aspects important to the “real-world deployment” of images.

Humans evaluated the generated images, finding that no single model excelled in all criteria. For image-to-text alignment, or how well the image matched the input text, OpenAI’s DALL-E 2 scored highest. The Stable Diffusion-based Dreamlike Photoreal model was ranked highest on quality (how photo-like), aesthetics (visual appeal), and originality.

Next year’s report is going to be bananas

You’ll note this AI Index Report cuts off at the end of 2023, which was a wildly tumultuous year of AI acceleration and a hell of a ride. In fact, the only year crazier than 2023 has been 2024, in which we’ve seen, among other things, the releases of cataclysmic developments like Suno, Sora, Google Genie, Claude 3, Channel 1, and Devin.

Each of these products, and several others, has the potential to flat-out revolutionize entire industries. And over all of them looms the mysterious spectre of GPT-5, which threatens to be such a broad and all-encompassing model that it could well consume all the others.

this is the most interesting year in human history, except for all future years

— Sam Altman (@sama) March 17, 2024

AI isn’t going anywhere, that’s for sure. The rapid rate of technical development seen throughout 2023, evident in this report, shows that AI will only keep evolving and closing the gap between humans and technology.

We know this is a lot to digest, but there’s more. The report also looks into the downsides of AI’s evolution and how it’s affecting global public perceptions of its safety, trustworthiness, and ethics. Stay tuned for the second part of this series, in the coming days!

Source: Stanford University HAI
