(Aleksey Nikiforov/Shutterstock)

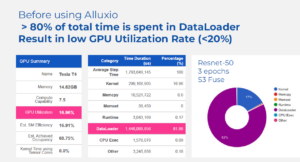

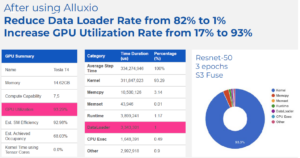

Customers that use the high-speed cache in the new Alluxio Enterprise AI platform can squeeze as much as four times as much work out of their GPU setups as they could without it, Alluxio announced today. Alluxio also says the overall model training pipeline, meanwhile, can be sped up by as much as 20x thanks to the data virtualization platform and its new DORA architecture.

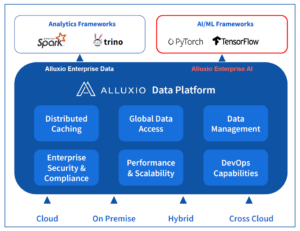

Alluxio is better known in the advanced analytics market than in the AI and machine learning (ML) market, which is a result of where it debuted in the data stack. Originally developed as a sister project to Apache Spark by Haoyuan “HY” Li, who was also a co-creator of Spark at UC Berkeley’s AMPLab, Alluxio gained traction by serving as a distributed file system in modern hybrid cloud environments. The product’s real forte was providing an abstraction layer to accelerate I/O for data moving between storage repositories like HDFS, S3, MinIO, and ADLS and processing engines like Spark, Trino, Presto, and Hive.

By providing a single global namespace across hybrid cloud environments built around the principle of separating compute and storage, Alluxio reduces complexity, bolsters efficiency, and simplifies data management for enterprise clients with sprawling data estates measuring in the hundreds of petabytes.

As deep learning matured, the folks at Alluxio realized that an opportunity existed to apply the company’s core IP to optimizing the flow of data and compute for AI workloads too, predominantly computer vision use cases but also some natural language processing (NLP).

The company saw that some of its existing analytics customers had adjacent AI teams that were struggling to grow their deep learning environments beyond a single GPU. To address the challenge of coordinating data in multi-GPU environments, customers either bought high-performance storage appliances to accelerate the flow of data from their primary data lakes into the GPUs, or paid data engineers to write scripts that moved data in a more low-level, manual fashion.

Alluxio saw an opportunity to use its technology to do essentially the same thing, accelerating the flow of training data into the GPU, but with more orchestration and automation around it. This gave rise to Alluxio Enterprise AI, which Alluxio unveiled today.

The new product shares some technology with the company’s existing product, which was previously called Alluxio Enterprise and has now been rebranded as Alluxio Enterprise Data, but there are important differences too, says Adit Madan, the director of product management at Alluxio.

“With Alluxio Enterprise AI, although some of the functionality sounds, and is, very familiar from what was there in Alluxio, this is a brand-new system architecture,” Madan tells Datanami. “This is a completely decentralized architecture that we’re naming DORA.”

With DORA, which is short for Decentralized Object Repository Architecture, Alluxio is, for the first time, tapping into the underlying hardware, including GPUs and NVMe drives, instead of living purely at the software level.

“We’re saying use Alluxio on your accelerated compute itself,” i.e., the GPU nodes, Madan says. “We’ll make use of NVMe, the pool of NVMe that you have available, and meet the I/O demand by simply pointing to your data lake, without the need for another high-performance storage solution underneath.”

In addition, Alluxio Enterprise AI leverages techniques that Alluxio has used on the analytics side for some time to “make the I/O more intelligent,” the company says. That includes optimizing the cache for the data access patterns commonly found in AI environments, which are characterized by large-file sequential access, large-file random access, and massive small-file access. “Think of it like we’re extracting all the I/O capabilities from the GPU cluster itself, instead of you having to buy more hardware for I/O,” Madan says.
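The general idea of serving training reads from local NVMe on the GPU nodes, rather than going back to the data lake for every read, can be illustrated with a generic read-through cache. The sketch below is hypothetical and is not Alluxio’s API or implementation: a local directory stands in for the NVMe pool, the `fetch_remote` callback stands in for a data lake read, and the least-recently-used (LRU) eviction policy is an assumption chosen for simplicity.

```python
import os
import tempfile
from collections import OrderedDict
from typing import Callable, Dict


class ReadThroughCache:
    """Toy read-through cache, hypothetical and for illustration only.

    A local directory stands in for NVMe drives on a GPU node, and
    `fetch_remote` stands in for a slower data lake read (e.g. from S3).
    Entries are evicted least-recently-used first once `capacity_bytes`
    would be exceeded. This is not Alluxio's API or implementation.
    """

    def __init__(self, cache_dir: str, capacity_bytes: int,
                 fetch_remote: Callable[[str], bytes]) -> None:
        self.cache_dir = cache_dir
        self.capacity_bytes = capacity_bytes
        self.fetch_remote = fetch_remote
        self._entries: "OrderedDict[str, int]" = OrderedDict()  # key -> size
        self._used = 0
        self.hits = 0
        self.misses = 0

    def _path(self, key: str) -> str:
        # Flatten object keys like "train/img_0001.jpg" into file names
        # (a simplification: keys that already contain "__" could collide).
        return os.path.join(self.cache_dir, key.replace("/", "__"))

    def read(self, key: str) -> bytes:
        if key in self._entries:
            # Hit: serve from local disk and mark as most recently used.
            self.hits += 1
            self._entries.move_to_end(key)
            with open(self._path(key), "rb") as f:
                return f.read()
        # Miss: fetch from the remote store, then keep a local copy.
        self.misses += 1
        data = self.fetch_remote(key)
        self._admit(key, data)
        return data

    def _admit(self, key: str, data: bytes) -> None:
        size = len(data)
        if size > self.capacity_bytes:
            return  # too large to cache at all; caller still gets the data
        while self._used + size > self.capacity_bytes:
            old_key, old_size = self._entries.popitem(last=False)  # LRU entry
            os.remove(self._path(old_key))
            self._used -= old_size
        with open(self._path(key), "wb") as f:
            f.write(data)
        self._entries[key] = size
        self._used += size


# Usage: five 100-byte "images" read for three training epochs.
remote: Dict[str, bytes] = {f"train/img_{i:04d}.jpg": bytes(100) for i in range(5)}
remote_reads = []


def fetch(key: str) -> bytes:
    remote_reads.append(key)  # record every trip to the "data lake"
    return remote[key]


with tempfile.TemporaryDirectory() as d:
    cache = ReadThroughCache(d, capacity_bytes=1000, fetch_remote=fetch)
    for epoch in range(3):
        for key in remote:
            cache.read(key)
    print(cache.hits, cache.misses, len(remote_reads))  # prints: 10 5 5
```

Because training typically rereads the same dataset every epoch, only the first epoch goes to the remote store in this toy run; later epochs are served from local disk. A production system would also have to handle concurrent readers, consistency with the data lake, and spreading the cache across many nodes, none of which this sketch attempts.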

Conceptually, Alluxio Enterprise AI works similarly to the company’s existing data analytics product: it accelerates I/O and lets users get more work done without worrying much about how the data gets from point A (the data lake) to point B (the compute cluster). But at a technology level, quite a bit of innovation was required, Madan says.

“To co-locate on the GPUs, we had to optimize how many resources we use,” he says. “We have to be really resource efficient. Instead of, say, consuming 10 CPUs, we have to bring it down to consuming only two CPUs on the GPU node to serve the I/O. So from a technical standpoint, there’s a really significant difference there.”

Alluxio Enterprise AI can scale to more than 10 billion objects, which could make it useful for the many small files used in computer vision use cases. It’s designed to integrate with the PyTorch and TensorFlow frameworks. It’s primarily intended for training AI models, but it can be used for model deployment too.

The results that Alluxio claims from the optimization are impressive. On the GPU side, Alluxio Enterprise AI delivers 2x to 4x more capacity, the company says. Customers can use that freed-up capacity either to get more computer vision training work done or to cut their GPU costs, Madan says.

Some of Alluxio’s early testers used the new product in production settings that included 200 GPU servers. “It’s not a small investment,” Madan says. “We have some active engagements with smaller [customers running] a few dozen. And we have some engagements that are much larger, with several hundred servers, each of which costs a few hundred grand.”

One early tester is Zhihu, which runs a popular question-and-answer site from its headquarters in Beijing. “Using Alluxio as the data access layer, we’ve significantly enhanced model training performance by 3x and deployment by 10x with GPU utilization doubled,” Mengyu Hu, a software engineer on Zhihu’s data platform team, said in a press release. “We are excited about Alluxio’s Enterprise AI and its new DORA architecture supporting access to massive small files. This offering gives us confidence in supporting AI applications facing the upcoming artificial intelligence wave.”

This solution is not for everybody, Madan says. Customers that are using off-the-shelf AI models, perhaps with a vector database for LLM use cases, don’t need it. “If you’re adapting your model [i.e., fine-tuning it], that’s where the need is,” he says.
