Welcome to the insideBIGDATA AI News Briefs Bulletin Board, our timely new feature bringing you the latest industry insights and perspectives surrounding the field of AI, including deep learning, large language models, generative AI, and transformers. We’re working tirelessly to dig up the most timely and curious tidbits underlying the day’s most popular technologies. We know this field is advancing rapidly and we want to bring you a regular resource to keep you informed and state-of-the-art. The news bites are constantly being added in reverse date order (most recent on top). With our bulletin board you can check back often to see what’s happening in our rapidly accelerating industry. Click HERE to check out previous “AI News Briefs” round-ups.

[1/5/2024] OpenAI’s GPT Store launching next week: OpenAI plans to launch its GPT Store next week, after a delay from its initial November announcement that was largely due to upheaval within the company, including CEO Sam Altman’s temporary departure. The platform is strategically positioned as more than just a marketplace: it marks a significant pivot for OpenAI, shifting from a model provider to a platform enabler.

The GPT Store will allow users to share and monetize custom GPT models developed using OpenAI’s advanced GPT-4 framework. This move is significant in making AI more accessible. Integral to this initiative, the GPT Builder tool allows the creation of AI agents for various tasks, removing the need for advanced programming skills. Alongside the GPT Store, OpenAI plans to implement a revenue-sharing model based on the usage of these AI tools. Additionally, a leaderboard will feature the most popular GPTs, and exceptional models will be highlighted in different categories.

[1/4/2024] OctoAI announced the private preview of fine-tuned LLMs on the OctoAI Text Gen Solution. Early access customers can:

- Bring any fine-tuned Llama 2, Code Llama, or Mi(s/x)tral models to OctoAI, and

- Run them at the same low per-token pricing and latency as the built-in Chat and Instruct models already available in the solution.

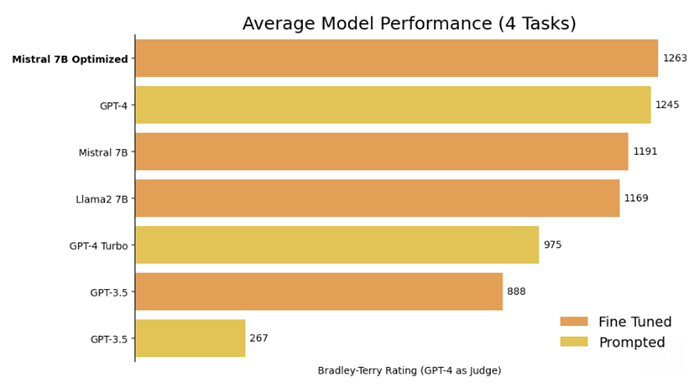

The company heard from customers that fine-tuned LLMs are the best way to create customized experiences in their applications, and that using the right fine-tuned LLM for a use case can outperform larger alternatives in quality and cost, as the figure from OpenPipe shows. Many also use fine-tuned versions of smaller LLMs to reduce their spending and dependence on OpenAI. But popular LLM serving platforms charge a “fine-tuning tax,” a premium of 2x or more for inference APIs against fine-tuned models.

OctoAI delivers good unit economics for generative AI models, and these efficiencies extend to fine-tuned LLMs on OctoAI. Building on these, OctoAI offers one simple per-token price for inferences against a model, whether it’s the built-in option or your choice of fine-tuned LLM.
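To make the pricing difference concrete, here is a rough back-of-the-envelope sketch in Python. The per-token price and monthly token volume are hypothetical placeholders, not OctoAI’s or any other vendor’s actual rates; only the 2x premium comes from the item above.

```python
def monthly_cost(tokens: int, price_per_million: float, premium: float = 1.0) -> float:
    """Return the inference bill in dollars for a given token volume.

    premium is the multiplier a platform applies to fine-tuned models
    (1.0 means fine-tuned and built-in models cost the same).
    """
    return tokens / 1_000_000 * price_per_million * premium


# Hypothetical numbers, for illustration only.
TOKENS_PER_MONTH = 500_000_000  # assumed workload
BASE_PRICE = 0.50               # assumed dollars per million tokens

flat = monthly_cost(TOKENS_PER_MONTH, BASE_PRICE)                # one per-token price
taxed = monthly_cost(TOKENS_PER_MONTH, BASE_PRICE, premium=2.0)  # 2x premium

print(f"flat pricing:    ${flat:,.2f}")
print(f"with 2x premium: ${taxed:,.2f}")
print(f"extra spend:     ${taxed - flat:,.2f}")
```

Under these assumed numbers the premium simply doubles the bill, so the gap grows linearly with token volume.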

[1/3/2024] Intel Corp. (Nasdaq: INTC) and DigitalBridge Group, Inc. (NYSE: DBRG), a global investment firm, today announced the formation of Articul8 AI, Inc., an independent company offering enterprise customers a full-stack, vertically-optimized and secure generative artificial intelligence (GenAI) software platform. The platform delivers AI capabilities that keep customer data, training and inference within the enterprise security perimeter. The platform also provides customers the choice of cloud, on-prem or hybrid deployment.

Articul8 offers a turnkey GenAI software platform that delivers speed, security and cost-efficiency to help large enterprise customers operationalize and scale AI. The platform was launched and optimized on Intel hardware architectures, including Intel® Xeon® Scalable processors and Intel® Gaudi® accelerators, but will support a range of hybrid infrastructure alternatives.

“With its deep AI and HPC domain knowledge and enterprise-grade GenAI deployments, Articul8 is well positioned to deliver tangible business outcomes for Intel and our broader ecosystem of customers and partners. As Intel accelerates AI everywhere, we look forward to our continued collaboration with Articul8,” said Pat Gelsinger, Intel CEO.

[1/2/2024] GitHub repo highlight: “Large Language Model Course” – a comprehensive LLM course on GitHub that paves the way for expertise in LLM technology.

[1/2/2024] Sam Altman and Jony Ive recruit iPhone design chief to build new AI device. Legendary designer Jony Ive, known for his iconic work at Apple, and Sam Altman are collaborating on a new artificial intelligence hardware project, enlisting former Apple executive Tang Tan to work at Ive’s design firm, LoveFrom.

[1/2/2024] Chegg Experiencing “Death by LLM”!?! Chegg began in 2005 as a disruptor, bringing online learning tools to students and transforming the landscape of education. But since the company stock’s (NYSE: CHGG) peak in 2021, Chegg has taken a nose dive of more than 90% while facing competition from widely available LLMs, e.g. ChatGPT, which came out on November 30, 2022. In August 2023, Chegg announced a partnership with Scale AI to transform its data into a dynamic learning experience for students, after already collaborating with OpenAI on CheggMate. A recent Harvard Business Review outtake highlights the potential value that Chegg’s specialized AI learning assistants may bring to a student’s learning experience by using feedback loops, instituting continuous model improvement, and training the model on proprietary datasets. The question remains, however: can Chegg effectively combine its user data with AI to reclaim lost competitive ground and take advantage of new revenue streams, or is it fighting a losing battle against the rapidly evolving GenAI ecosystem? Personally, I have no love lost for Chegg, as I’ve discovered a number of my Intro to Data Science students cheating on homework assignments and exams by accessing my coursework uploaded to Chegg.

[1/2/2024] AI research paper highlight: “Gemini: A Family of Highly Capable Multimodal Models,” the paper behind the new Google Gemini model release. The main problem addressed by Gemini is the challenge of creating models that can effectively understand and process multiple modalities (text, image, audio, and video) while also delivering advanced reasoning and understanding in each individual domain.

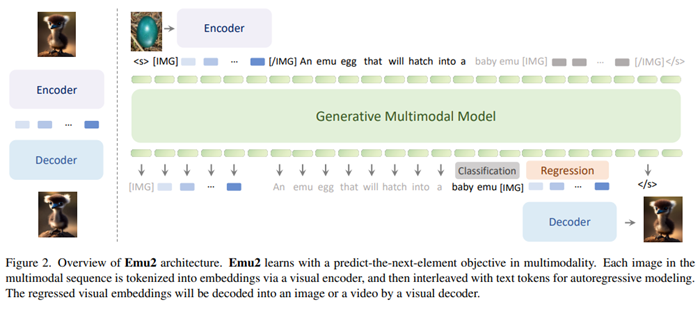

[1/2/2024] AI research paper highlight: “Generative Multimodal Models are In-Context Learners.” This research demonstrates that large multimodal models can enhance their task-agnostic in-context learning capabilities through effective scaling-up. The primary problem addressed is the struggle of multimodal systems to mimic the human ability to easily solve multimodal tasks in context, with only a few demonstrations or simple instructions. The paper proposes Emu2, a new 37B generative multimodal model trained on large-scale multimodal sequences with a unified autoregressive objective. Emu2 consists of a visual encoder/decoder and a multimodal transformer. Images are tokenized with the visual encoder into a continuous embedding space and interleaved with text tokens for autoregressive modeling. Emu2 is initially pretrained only on the captioning task with both image-text and video-text paired datasets. Emu2’s visual decoder is initialized from SDXL-base and can be considered a visual detokenizer built on a diffusion model. The VAE is kept static while the weights of the diffusion U-Net are updated. Emu2-Chat is derived from Emu2 by fine-tuning the model with conversational data, and Emu2-Gen is fine-tuned on complex compositional generation tasks. The results suggest that Emu2 achieves state-of-the-art few-shot performance on multiple visual question-answering datasets and that performance improves as the number of in-context examples increases. Emu2 also learns to follow visual prompting in context, showcasing strong multimodal reasoning capabilities for tasks in the wild. When instruction-tuned to follow specific instructions, Emu2 further sets new benchmarks on challenging tasks such as question answering for large multimodal models and open-ended subject-driven generation.
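As a rough illustration of what interleaving image embeddings with text tokens in a single sequence can look like, here is a toy Python sketch. It is not Emu2’s code: `embed_text`, `encode_image`, `build_sequence`, and the tiny dimensions are hypothetical stand-ins chosen only to show how a mixed document becomes one ordered list of vectors that an autoregressive model could consume.

```python
from typing import List, Sequence, Tuple

Vector = List[float]
Segment = Tuple[str, Sequence[float]]  # ("text", token ids) or ("image", pixels)

EMBED_DIM = 4         # toy size; real models use far larger embeddings
TOKENS_PER_IMAGE = 2  # toy number of vectors produced per image


def embed_text(token_ids: Sequence[int]) -> List[Vector]:
    """Stand-in for a text embedding table: one vector per token id."""
    return [[float(t)] * EMBED_DIM for t in token_ids]


def encode_image(pixels: Sequence[float]) -> List[Vector]:
    """Stand-in for a visual encoder: maps an image to continuous vectors."""
    mean = sum(pixels) / len(pixels)
    return [[mean + i] * EMBED_DIM for i in range(TOKENS_PER_IMAGE)]


def build_sequence(segments: Sequence[Segment]) -> List[Vector]:
    """Concatenate text and image embeddings in their original order."""
    sequence: List[Vector] = []
    for kind, payload in segments:
        if kind == "text":
            sequence.extend(embed_text([int(p) for p in payload]))
        elif kind == "image":
            sequence.extend(encode_image(payload))
        else:
            raise ValueError(f"unknown segment kind: {kind}")
    return sequence


# A hypothetical document: two text tokens, one image, one more text token.
example: List[Segment] = [("text", [5, 7]), ("image", [0.0, 1.0]), ("text", [9])]
seq = build_sequence(example)
print(len(seq), "vectors of size", EMBED_DIM)
```

The point of the sketch is only the ordering: image vectors sit in the same sequence as text vectors, so a single next-element objective can cover both.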

Sign up for the free insideBIGDATA newsletter.

Join us on Twitter: https://twitter.com/InsideBigData1

Join us on LinkedIn: https://www.linkedin.com/company/insidebigdata/

Join us on Facebook: https://www.facebook.com/insideBIGDATANOW
