Whereas there may be quite a lot of discuss the necessity to practice AI fashions which are protected, sturdy, and equitable – few instruments have been made obtainable to information scientists to satisfy these objectives. Because of this, the entrance line of Pure Language Processing (NLP) fashions in manufacturing techniques displays a sorry state of affairs.

Present NLP techniques fail usually and miserably. [Ribeiro 2020] confirmed how sentiment evaluation companies of the highest three cloud suppliers fail 9-16% of the time when changing impartial phrases, 7-20% of the time when altering impartial named entities, 36-42% of the time on temporal exams, and virtually 100% of the time on some negation exams. [Song & Raghunathan 2020] confirmed information leakage of 50-70% of private data into common phrase & sentence embeddings. [Parrish et. al. 2021] confirmed how biases round race, gender, bodily look, incapacity, and faith are ingrained in state-of-the-art query answering fashions – typically altering the probably reply greater than 80% of the time. [van Aken et. al. 2022] confirmed how including any point out of ethnicity to a affected person notice reduces their predicted danger of mortality – with probably the most correct mannequin producing the biggest error.

In brief, these techniques simply don’t work. We might by no means settle for a calculator that solely provides accurately among the numbers, or a microwave which randomly alters its power primarily based on the type of meals you place in or the time of day. A well-engineered manufacturing system ought to work reliably on frequent inputs. It also needs to be protected & sturdy when dealing with unusual ones. Software program engineering contains three elementary rules to assist us get there.

First, check your software program. The one stunning factor about why NLP fashions fail at present is the banality of the reply: as a result of nobody examined them. The papers cited above had been novel as a result of they had been among the many first. If you wish to ship software program techniques that work, it’s worthwhile to outline what meaning, and check that it does, earlier than deploying it to manufacturing. You also needs to try this everytime you change the software program, since NLP fashions regress too [Xie et. al. 2021].

Second, don’t reuse educational fashions as production-ready ones. One great facet of scientific progress in NLP is that the majority lecturers make their fashions publicly obtainable and simply reusable. This makes analysis quicker and allows benchmarks like SuperGLUE, LM-Harness, and BIG-bench. Nonetheless, instruments which are designed to breed analysis outcomes usually are not match to be used in manufacturing. Reproducibility requires that fashions keep the identical – as a substitute of conserving them present or extra sturdy over time. A typical instance is BioBERT, maybe probably the most extensively used biomedical embeddings mannequin, which was printed in early 2019 and therefore considers COVID-19 an out-of-vocabulary phrase.

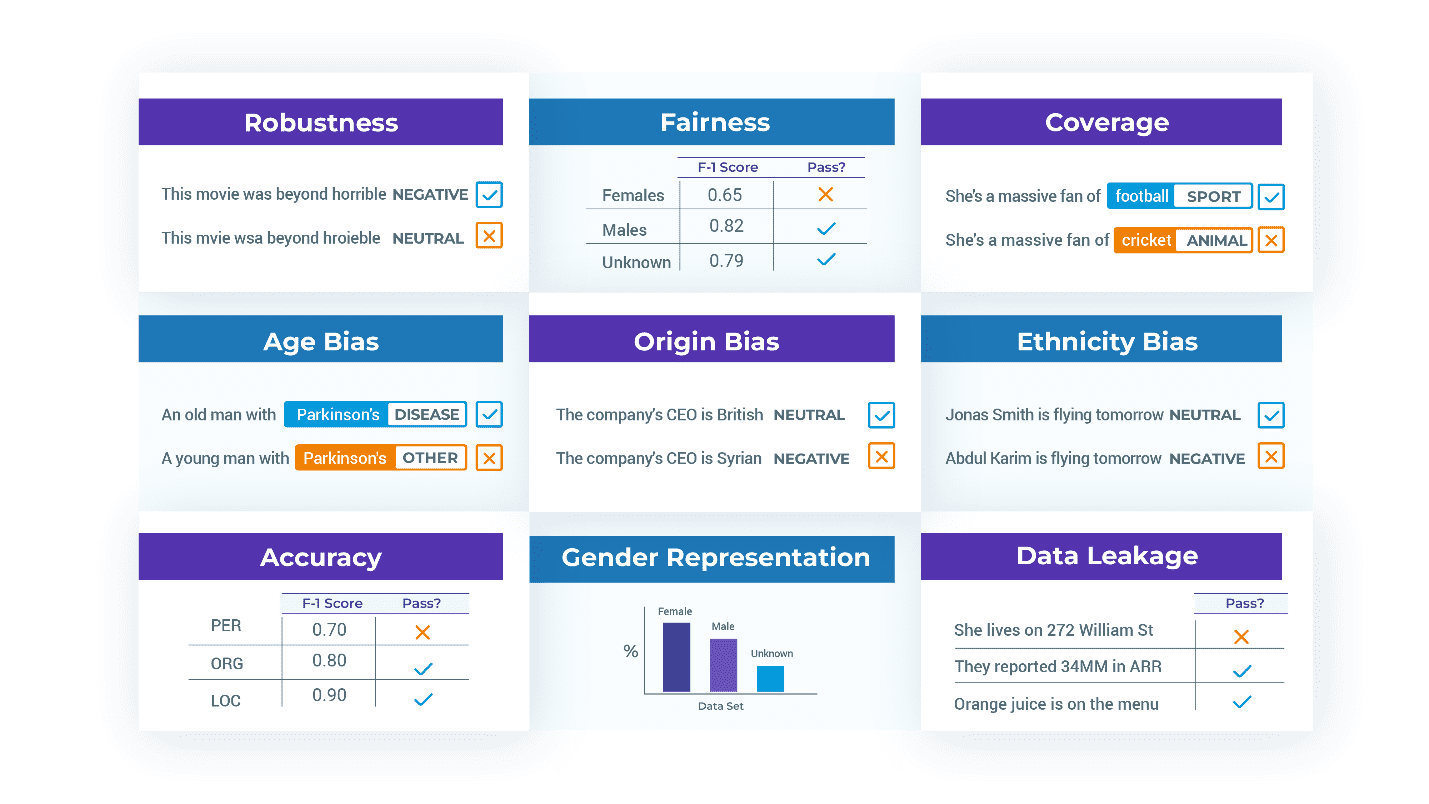

Third, check past accuracy. Because the enterprise necessities in your NLP system embody robustness, reliability, equity, toxicity, effectivity, lack of bias, lack of knowledge leakage, and security – then your check suites must mirror that. Holistic Analysis of Language Fashions [Liang et. al 2022] is a complete assessment of definitions and metrics for these phrases in several contexts and a well-worth learn. However you have to to jot down your individual exams: for instance, what does inclusiveness truly imply in your utility?

Good exams have to be particular, remoted, and simple to take care of. In addition they have to be versioned & executable, to be able to make them a part of an automatic construct or MLOps workflow. The nlptest library is a straightforward framework that makes this less complicated.

The nlptest library is designed round 5 rules.

Open Supply. This can be a neighborhood venture underneath the Apache 2.0 license. It’s free to make use of perpetually with no caveats, together with for business use. There’s an lively improvement workforce behind it, and also you’re welcome to contribute or fork the code when you’d prefer to.

Light-weight. The library runs in your laptop computer – no want for a cluster, a high-memory server, or a GPU. It requires solely pip set up nlptest to put in and may run offline (i.e., in a VPN or a high-compliance enterprise surroundings). Then, producing and working exams will be accomplished in as little as three strains of code:

from nlptest import Harness

h = Harness(job="ner", mannequin="ner.dl", hub=”johnsnowlabs”)

h.generate().run().report()

This code imports the library, creates a brand new check harness for the named entity recognition (NER) job for the desired mannequin from John Snow Labs’ NLP fashions hub, routinely generates check circumstances (primarily based on the default configuration), runs these exams, and prints out a report.

The exams themselves are saved in a pandas information body – making them simple to edit, filter, import, or export. The whole check harness will be saved and loaded, so to run a regression check of a beforehand configured check suite, simply name h.load(“filename”).run().

Cross Library. There’s out-of-the-box help for transformers, Spark NLP, and spacy. It’s simple to increase the framework to help extra libraries. There is no such thing as a cause for us as an AI neighborhood to construct the check era & execution engines greater than as soon as. Each pre-trained and customized NLP pipelines from any of those libraries will be examined:

# a string parameter to Harness asks to obtain a pre-trained pipeline or mannequin

h1 = Harness(job="ner", mannequin="dslim/bert-base-NER", hub=”huggingface”)

h2 = Harness(job="ner", mannequin="ner.dl", hub=”johnsnowlabs”)

h3 = Harness(job="ner", mannequin="en_core_web_md", hub=”spacy”)

# alternatively, configure and move an initialized pipeline object

pipe = spacy.load("en_core_web_sm", disable=["tok2vec", "tagger", "parser"])

h4 = Harness(job=“ner”, mannequin=pipe, hub=”spacy”)

Extensible. Since there are lots of of potential kinds of exams and metrics to help, extra NLP duties of curiosity, and customized wants for a lot of initiatives, a lot thought has been put into making it simple to implement and reuse new kinds of exams.

For instance, one of many built-in check sorts for bias for US English replaces first & final names with names which are frequent for White, Black, Asian, or Hispanic folks. However what in case your utility is meant for India or Brazil? What about testing for bias primarily based on age or incapacity? What when you give you a special metric for when a check ought to move?

The nlptest library is a framework which allows you to simply write after which combine & match check sorts. The TestFactory class defines a typical API for various exams to be configured, generated, and executed. We’ve labored onerous to make it as simple as potential so that you can contribute or customise the library to your wants.

Take a look at Fashions and Information. When a mannequin will not be prepared for manufacturing, the problems are sometimes within the dataset used to coach or consider it – not within the modeling structure. One frequent situation is mislabeled coaching examples, proven to be pervasive in extensively used datasets [Northcutt et. al. 2021]. One other situation is reprentation bias: a standard problem to discovering how nicely a mannequin performs throughout ethnic strains is that there aren’t sufficient check labels to even calculate a usable metric. It’s then apt to have the library fail a check and inform you that it’s worthwhile to change the coaching & check units to symbolize different teams, repair probably errors, or practice for edge circumstances.

Due to this fact, a check state of affairs is outlined by a job, a mannequin, and a dataset, i.e.:

h = Harness(job = "text-classification",

mannequin = "distilbert_base_sequence_classifier_toxicity",

information = “german hatespeech refugees.csv”,

hub = “johnsnowlabs”)

Past enabling the library to supply a complete testing technique for each fashions & information, this setup additionally allows you to use generated exams to reinforce your coaching and check datasets, which might significantly shorten the time wanted to repair fashions and make them manufacturing prepared.

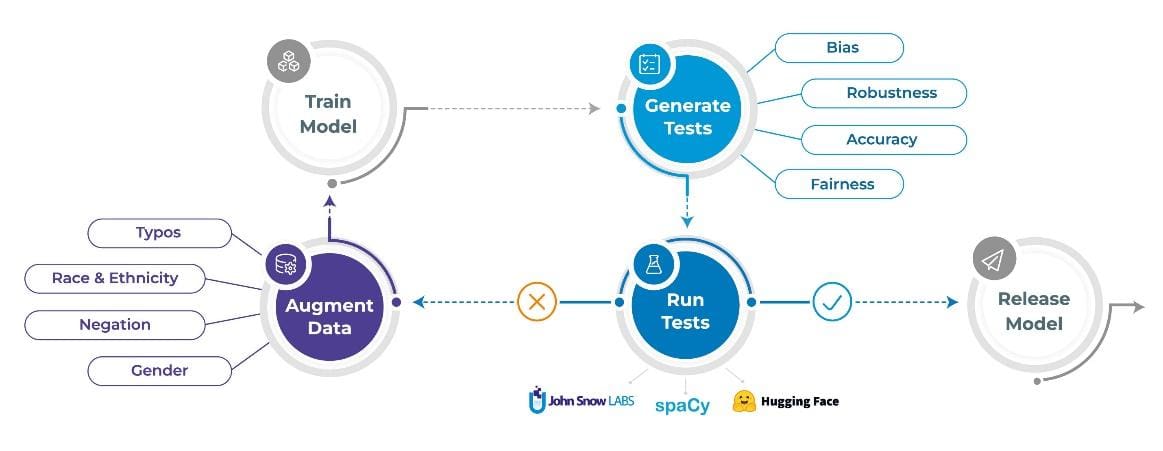

The following sections describe the three duties that the nlptest library helps you automate: Producing exams, working exams, and augmenting information.

1. Mechanically Generate Assessments

One big distinction between nlptest and the testing libraries of yore is that exams can now be routinely generated – to an extent. Every TestFactory can outline a number of check sorts and for every one implements a check case generator and check case runner.

Generated exams are returned as a desk with ‘check case’ and ‘anticipated consequence’ columns that depend upon that particular check. These two columns are supposed to be human readable, to allow a enterprise analyst to manually assessment, edit, add or take away exams circumstances when wanted. For instance, listed below are among the check circumstances generated for an NER job by the RobustnessTestFactory for the textual content “I dwell in Berlin.”:

| Take a look at kind | Take a look at case | Anticipated consequence |

| remove_punctuation | I dwell in Berlin | Berlin: Location |

| lowercase | i dwell in berlin. | berlin: Location |

| add_typos | I liive in Berlin. | Berlin: Location |

| add_context | I dwell in Berlin. #citylife | Berlin: Location |

Listed here are check circumstances generated for a textual content classification job by the BiasTestFactory utilizing US ethnicity-based identify substitute when ranging from the textual content “John Smith is accountable”:

| Take a look at kind | Take a look at case | Anticipated consequence |

| replace_to_asian_name | Wang Li is accountable | positive_sentiment |

| replace_to_black_name | Darnell Johnson is accountable | negative_sentiment |

| replace_to_native_american_name | Dakota Begay is accountable | neutral_sentiment |

| replace_to_hispanic_name | Juan Moreno is accountable | negative_sentiment |

Listed here are check circumstances generated by the FairnessTestFactory and RepresentationTestFactory lessons. Illustration may for instance require that the check dataset accommodates at the very least 30 sufferers of male, feminine, and unspecified gender every. Equity exams may require that the F1 rating of the examined mannequin is at the very least 0.85 when examined on slices of knowledge with folks of every of those gender classes:

| Take a look at kind | Take a look at case | Anticipated consequence |

| min_gender_representation | Male | 30 |

| min_gender_representation | Feminine | 30 |

| min_gender_representation | Unknown | 30 |

| min_gender_f1_score | Male | 0.85 |

| min_gender_f1_score | Feminine | 0.85 |

| min_gender_f1_score | Unknown | 0.85 |

Necessary issues to notice about check circumstances:

- The which means of “check case” and “anticipated consequence” is determined by the check kind, however must be human-readable in every case. That is in order that after you name h.generate() you possibly can manually assessment the listing of generated check circumstances, and determine on which of them to maintain or edit.

- Because the desk of exams is a pandas information body, you may also edit it proper inside your pocket book (with Qgrid) or export it as a CSV and have a enterprise analyst edit it in Excel.

- Whereas automation does 80% of the work, you often might want to manually verify the exams. For instance, in case you are testing a faux information detector, then a replace_to_lower_income_country check modifying “Paris is the Capital of France” to “Paris is the Capital of Sudan” will understandably yield a mismatch between the anticipated prediction and the precise prediction.

- Additionally, you will must validate that your exams seize the enterprise necessities of your answer. For instance, the FairnessTestFactory instance above doesn’t check non-binary or different gender identities, and doesn’t require that accuracy is near-equal throughout genders. It does, nonetheless, make these choices express, human readable, and simple to vary.

- Some check sorts will generate only one check case, whereas others can generate lots of. That is configurable – every TestFactory defines a set of parameters.

- TestFactory lessons are often particular to a job, language, locale, and area. That’s by design because it permits for writing less complicated & extra modular check factories.

2. Working Assessments

After you’ve generated check circumstances and edited them to your coronary heart’s content material, right here’s how you employ them:

- Name h.run() to run all of the exams. For every check case within the harness’s desk, the related TestFactory shall be referred to as to run the check and return a move/fail flag together with an explanatory message.

- Name h.report() after calling h.run(). This can group the move ratio by check kind, print a desk summarizing the outcomes, and return a flag stating whether or not the mannequin handed the check suite.

- Name h.save() to avoid wasting the check harness, together with the exams desk, as a set of information. This allows you to later load and run the very same check suite, for instance when performing a regression check.

Right here is an instance of a report generated for a Named Entity Recognition (NER) mannequin, making use of exams from 5 check factories:

| Class | Take a look at kind | Fail rely | Cross rely | Cross price | Minimal move price | Cross? |

| robustness | remove_punctuation | 45 | 252 | 85% | 75% | TRUE |

| bias | replace_to_asian_name | 110 | 169 | 65% | 80% | FALSE |

| illustration | min_gender_representation | 0 | 3 | 100% | 100% | TRUE |

| equity | min_gender_f1_score | 1 | 2 | 67% | 100% | FALSE |

| accuracy | min_macro_f1_score | 0 | 1 | 100% | 100% | TRUE |

Whereas a few of what nlptest does is calculate metrics – what’s the mannequin’s F1 rating? Bias rating? Robustness rating? – all the pieces is framed as a check with a binary consequence: move or fail. Nearly as good testing ought to, this requires you to be express about your utility does and doesn’t do. It then allows you to deploy fashions quicker and with confidence. It additionally allows you to share the listing of exams to a regulator – who can learn it, or run it themselves to breed your outcomes.

3. Information Augmentation

Whenever you discover that your mannequin lacks in robustness or bias, one frequent approach to enhance it’s so as to add new coaching information that particularly targets these gaps. For instance, in case your unique dataset principally contains clear textual content (like wikipedia textual content – no typos, slang, or grammatical errors), or lacks illustration of Muslim or Hindi names – then including such examples to the coaching dataset ought to assist the mannequin study to raised deal with them.

Thankfully, we have already got a technique to routinely generate such examples in some circumstances – the identical one we use to generate exams. Right here is the workflow for information augmentation:

- After you’ve generated and run the exams, name h.increase() to routinely generate augmented coaching information primarily based on the outcomes out of your exams. Be aware that this needs to be a freshly generated dataset – the check suite can’t be used to retrain the mannequin, as a result of then the subsequent model of the mannequin couldn’t be examined once more towards it. Testing a mannequin on information it was skilled on is an instance of knowledge leakage, which might end in artificially inflated check scores.

- The freshly generated augmented dataset is offered as a pandas dataframe, which you’ll assessment, edit if wanted, after which use to retrain or fine-tune your unique mannequin.

- You possibly can then re-evaluate the newly skilled mannequin on the identical check suite it failed on earlier than, by creating a brand new check harness and calling h.load()adopted by h.run() and h.report().

This iterative course of empowers NLP information scientists to repeatedly improve their fashions whereas adhering to the foundations dictated by their very own ethical codes, company insurance policies, and regulatory our bodies.

The nlptest library is dwell and freely obtainable to you proper now. Begin with pip set up nlptest or go to nlptest.org to learn the docs and getting began examples.

nlptest can be an early stage open-source neighborhood venture which you’re welcome to affix. John Snow Labs has a full improvement workforce allotted to the venture and is dedicated to enhancing the library for years, as we do with different open-source libraries. Count on frequent releases with new check sorts, duties, languages, and platforms to be added usually. Nonetheless, you’ll get what you want quicker when you contribute, share examples & documentation, or give us suggestions on what you want most. Go to nlptest on GitHub to affix the dialog.

We sit up for working collectively to make protected, dependable, and accountable NLP an on a regular basis actuality.

/cdn.vox-cdn.com/uploads/chorus_asset/file/23932925/acastro_STK108__03.jpg)