Welcome to the Stanford NLP Reading Group Blog! Inspired by other groups, notably the UC Irvine NLP Group, we have decided to blog about the papers we read at our reading group.

In this first post, we’ll discuss the following paper:

Kuncoro et al. “LSTMs Can Learn Syntax-Sensitive Dependencies Well, But Modeling Structure Makes Them Better.” ACL 2018.

This paper builds upon the earlier work of Linzen et al.:

Linzen et al. “Assessing the Ability of LSTMs to Learn Syntax-Sensitive Dependencies.” TACL 2016.

Both papers address the question, “Do neural language models actually learn to model syntax?” As we’ll see, the answer is yes, even for models like LSTMs that don’t explicitly represent syntactic relationships. Moreover, models like RNN Grammars, which build representations based on syntactic structure, fare even better.

First, we must decide how to measure whether a language model has “learned to model syntax.” Linzen et al. propose using subject-verb number agreement to quantify this. Consider the following four sentences:

- The key is on the desk

- * The key are on the desk

- * The keys is on the desk

- The keys are on the desk

Sentences 2 and 3 are invalid because the subject (“key”/“keys”) disagrees with the verb (“are”/“is”) in number (singular/plural). Therefore, a good language model should give higher probability to sentences 1 and 4.

For these simple sentences, a simple heuristic can predict whether the singular or plural form of the verb is preferred (e.g., find the closest noun to the left of the verb, and check whether it is singular or plural). However, this heuristic fails on more complex sentences. For example, consider:

The keys to the cupboard are on the desk.

Here we must use the plural verb, even though the closest noun (“cupboard”) is singular. What matters here is not linear distance in the sentence, but syntactic distance: “are” and “keys” have a direct syntactic relationship (namely an nsubj arc). In general there may be many intervening nouns between the subject and verb (“The keys to the cupboard in the room next to the kitchen…”), making it very challenging to predict the correct verb form. This is the key idea of Linzen et al.: we can measure whether a language model has learned about syntax by asking, how well does the language model predict the correct verb form on sentences where linear distance is a bad heuristic?
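To make the failure of the nearest-noun heuristic concrete, here is a minimal sketch. The `NOUN_NUMBER` lexicon and the function name are made up for illustration; a real system would get noun number from a tagger rather than a hand-written table.

```python
# Toy number lexicon (an assumption for this example, not from either paper).
NOUN_NUMBER = {
    "key": "singular", "keys": "plural",
    "cupboard": "singular", "desk": "singular",
}

def nearest_noun_heuristic(prefix_words):
    """Guess the verb's number from the closest known noun to its left."""
    for word in reversed(prefix_words):
        number = NOUN_NUMBER.get(word.lower())
        if number is not None:
            return number
    return None  # no known noun in the prefix

simple = "The keys".split()
hard = "The keys to the cupboard".split()

print(nearest_noun_heuristic(simple))  # plural (correct)
print(nearest_noun_heuristic(hard))    # singular (wrong: the subject is "keys")
```

The second call picks up “cupboard” because it is linearly closest to the verb, even though it is not the syntactic subject.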

Note how convenient it is that this syntax-sensitive dependency exists in English: it allows us to draw conclusions about the syntactic awareness of models that only make word-level predictions. Unfortunately, the downside is that this approach is limited to certain types of syntactic relationships. We might also want to see if language models can correctly predict where a prepositional phrase attaches, for example, but there is no analogue of number agreement involving prepositional phrases, so we cannot develop an analogous test.

Linzen et al. found that LSTM language models are not very good at predicting the correct verb form in cases where linear distance is unhelpful. On a large test set of sentences from English Wikipedia, they measure how often the language model prefers to generate the verb with the correct form (“are”, in the above example) over the verb with the wrong form (“is”). The language model is considered correct if

P(“are” | “The keys to the cupboard”) > P(“is” | “The keys to the cupboard”).

This is a natural choice, although another possibility is to let the language model see the entire sentence before predicting. In this regime, the model would be considered correct if

P(“The keys to the cupboard are on the desk”) > P(“The keys to the cupboard is on the desk”).

Here, the model gets to use both the left and right context when deciding the correct verb. This puts it on equal footing with, for example, a syntactic parser, which can look at the entire sentence and generate a full parse tree. On the other hand, you could argue that because LSTMs generate from left to right, whatever is on the right-hand side is irrelevant to whether the model generates the correct verb during generation.
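The two correctness criteria can be sketched side by side. Everything below is hypothetical: `TOY_BIGRAM` is a hand-written table of made-up probabilities standing in for a trained language model, chosen only so that the two criteria disagree on the running example.

```python
import math

# Made-up next-word probabilities keyed by (previous word, next word).
# A real experiment would query a trained LSTM instead of this table.
TOY_BIGRAM = {
    ("<s>", "The"): 1.0, ("The", "keys"): 1.0, ("keys", "to"): 1.0,
    ("to", "the"): 1.0, ("on", "the"): 1.0,
    ("the", "cupboard"): 0.5, ("the", "desk"): 0.5,
    ("cupboard", "are"): 0.3, ("cupboard", "is"): 0.7,
    ("are", "on"): 0.9, ("is", "on"): 0.2,
}

def next_word_logprob(prefix, word):
    """log P(word | prefix) under the toy model (only the last word matters)."""
    prev = prefix[-1] if prefix else "<s>"
    return math.log(TOY_BIGRAM.get((prev, word), 1e-6))

def sentence_logprob(words):
    """log P(sentence) as a sum of next-word log-probabilities."""
    return sum(next_word_logprob(words[:i], w) for i, w in enumerate(words))

def correct_left_context(prefix, good, bad):
    # Criterion 1: compare the two verb forms given only the prefix.
    return next_word_logprob(prefix, good) > next_word_logprob(prefix, bad)

def correct_full_sentence(prefix, good, bad, suffix):
    # Criterion 2: compare the probabilities of the two complete sentences.
    return (sentence_logprob(prefix + [good] + suffix)
            > sentence_logprob(prefix + [bad] + suffix))

prefix = "The keys to the cupboard".split()
suffix = "on the desk".split()
print(correct_left_context(prefix, "are", "is"))           # False
print(correct_full_sentence(prefix, "are", "is", suffix))  # True
```

With these invented numbers the toy model fails the left-context test but passes the full-sentence test, which shows that the choice of criterion can change the measured error rate.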

Using the “left context only” definition of correctness, Linzen et al. find that the language model does okay on average, but it struggles on sentences in which there are nouns between the subject and verb with the opposite number from the subject (such as “cupboard” in the earlier example). The authors refer to these nouns as attractors. The language model does reasonably well (7% error) when there are no attractors, but this jumps to 33% error on sentences with one attractor, and a whopping 70% error (worse than chance!) on very challenging sentences with 4 attractors. In contrast, an LSTM trained specifically to predict whether an upcoming verb is singular or plural is much better, with only 18% error when 4 attractors are present. Linzen et al. conclude that while the LSTM architecture can learn about these long-range syntactic cues, the language modeling objective forces it to spend a lot of model capacity on other things, resulting in much worse error rates on challenging cases.
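The bookkeeping behind these per-attractor error rates can be sketched as follows. The function names and the toy `examples` list are illustrative assumptions, not data or code from either paper.

```python
from collections import defaultdict

def count_attractors(intervening_noun_numbers, subject_number):
    """Count nouns between subject and verb whose number differs from the subject's."""
    return sum(1 for n in intervening_noun_numbers if n != subject_number)

def error_by_attractors(examples):
    """examples: iterable of (num_attractors, model_was_correct) pairs."""
    totals, errors = defaultdict(int), defaultdict(int)
    for k, was_correct in examples:
        totals[k] += 1
        errors[k] += 0 if was_correct else 1
    return {k: errors[k] / totals[k] for k in sorted(totals)}

# "The keys to the cupboard in the room next to the kitchen ..."
# Subject "keys" is plural; "cupboard", "room", "kitchen" are all singular.
print(count_attractors(["singular", "singular", "singular"], "plural"))  # 3

# Made-up outcomes, only to show the grouping.
examples = [(0, True), (0, True), (1, True), (1, False)]
print(error_by_attractors(examples))  # {0: 0.0, 1: 0.5}
```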

However, Kuncoro et al. re-examine these conclusions, and find that with careful hyperparameter tuning and more parameters, an LSTM language model can actually do a lot better. They use a 350-dimensional hidden state (versus 50-dimensional in Linzen et al.) and are able to get 1.3% error with 0 attractors, 3.0% error with 1 attractor, and 13.8% error with 4 attractors. By scaling up LSTM language models, it seems we can get them to learn qualitatively different things about language! This jibes with the work of Melis et al., who found that careful hyperparameter tuning makes standard LSTM language models outperform many fancier models.

Next, Kuncoro et al. examine variants of the standard LSTM word-level language model. Some of their findings include:

- A language model trained on a different dataset (the 1 Billion Word Benchmark, which is mostly news instead of Wikipedia) does slightly worse across the board, but still learns some syntax (20% error with 4 attractors)

- A character-level language model does about the same with 0 attractors, but is worse than the word-level model as more attractors are added (6% error versus 3% with 1 attractor; 27.8% error versus 13.8% with 4 attractors). When many attractors are present, the subject is very far from the verb in terms of number of characters, so the character-level model struggles.

But the most important question Kuncoro et al. ask is whether incorporating syntactic information during training can actually improve language model performance on this subject-verb agreement task. As a control, they first try keeping the neural architecture the same (still an LSTM) but change the training data so that the model is trying to generate not only the words in a sentence but also the corresponding constituency parse tree. They do this by linearizing the parse tree via a depth-first pre-order traversal, so that a tree like

turns into a sequence of tokens like

["(S", "(NP", "(NP", "The", "keys", ")NP", "(PP", "to", "(NP", ...]

The LSTM is trained just like a language model to predict sequences of tokens like these. At test time, the model gets the whole prefix, consisting of both words and parse tree symbols, and predicts what verb comes next. In other words, it computes

P(“are” | “(S (NP (NP The keys )NP (PP to (NP the desk )NP )PP )NP (VP”).
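The linearization step can be sketched in a few lines. The nested-tuple tree encoding (`(label, child, ...)` with plain strings as words) is an assumption made for this example; the token format follows the sequence shown above.

```python
def linearize(tree):
    """Depth-first pre-order traversal of a (label, child, ...) tuple tree."""
    if isinstance(tree, str):        # leaf: a word
        return [tree]
    label, *children = tree
    tokens = ["(" + label]           # open the constituent before its children
    for child in children:
        tokens.extend(linearize(child))
    tokens.append(")" + label)       # close it after the last child
    return tokens

# Subject noun phrase of the running example.
subject = ("NP", ("NP", "The", "keys"), ("PP", "to", ("NP", "the", "cupboard")))

# Test-time prefix: the sentence is opened, the subject is complete,
# and the verb phrase has just been opened.
prefix = ["(S"] + linearize(subject) + ["(VP"]
print(" ".join(prefix))
# (S (NP (NP The keys )NP (PP to (NP the cupboard )NP )PP )NP (VP
```

The model is then asked to compare the probabilities of “are” and “is” as the next token after this prefix.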

You may be wondering where the parse tree tokens come from, since the dataset is just a bunch of sentences from Wikipedia with no associated gold-labeled parse trees. The parse trees were generated with an off-the-shelf parser. The parser gets to look at the whole sentence before predicting a parse tree, which technically leaks information about the words to the right of the verb; we’ll come back to this concern in a little bit.

Kuncoro et al. find that a plain LSTM trained on sequences of tokens like this does not do any better than the original LSTM language model. Changing the data alone does not seem to force the model to actually get better at modeling these syntax-sensitive dependencies.

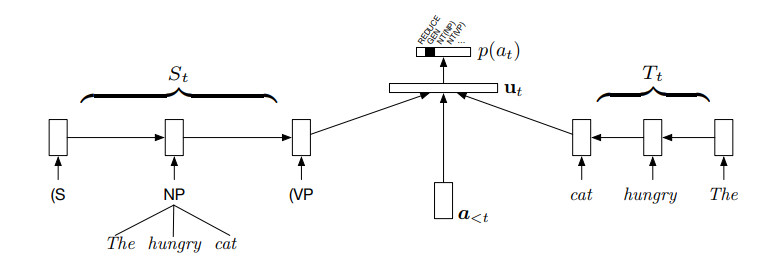

Next, the authors additionally change the model architecture, replacing the LSTM with an RNN Grammar. Like the LSTM that predicts the linearized parse tree tokens, the RNN Grammar also defines a joint probability distribution over sentences and their parse trees. But unlike the LSTM, the RNN Grammar uses the tree structure of words seen so far to build representations of constituents compositionally. The figure below shows the RNN Grammar architecture:

On the left is the stack, consisting of all constituents that have either been opened or fully created. The embedding for a completed constituent (“The hungry cat”) is created by composing the embeddings for its children, via a neural network. An RNN then runs over the stack to generate an embedding of the current stack state. This, together with a representation of the history of past parsing actions (a_{<t}), is used to predict the next parsing action (i.e. to generate a new constituent, complete an existing one, or generate a new word). The RNN Grammar variant used by Kuncoro et al. ablates the “buffer” (T_t) on the right side of the figure.
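The stack mechanics described here can be illustrated with a purely symbolic sketch. It leaves out all the neural parts: `compose` just builds a tuple where the real model would run a learned composition network, and the action names `NT`, `GEN`, and `REDUCE` are borrowed from the RNN Grammar literature rather than from this post.

```python
class Open:
    """Marker for a constituent that has been opened but not yet completed."""
    def __init__(self, label):
        self.label = label

def compose(label, children):
    # Stand-in for the learned composition network: just build a tuple.
    return (label, *children)

def run_actions(actions):
    """Apply parsing actions and return the final stack."""
    stack = []
    for action, arg in actions:
        if action == "NT":        # open a new constituent
            stack.append(Open(arg))
        elif action == "GEN":     # generate a new word
            stack.append(arg)
        elif action == "REDUCE":  # complete the most recently opened constituent
            children = []
            while not isinstance(stack[-1], Open):
                children.append(stack.pop())
            label = stack.pop().label
            # The children collapse into a single stack element.
            stack.append(compose(label, reversed(children)))
        else:
            raise ValueError(f"unknown action: {action}")
    return stack

actions = [("NT", "S"), ("NT", "NP"), ("GEN", "The"),
           ("GEN", "hungry"), ("GEN", "cat"), ("REDUCE", None)]
stack = run_actions(actions)
print(stack[1])  # ('NP', 'The', 'hungry', 'cat')
```

After the `REDUCE`, the three words are replaced by one completed constituent, so whatever reads the stack next sees the noun phrase as a single unit rather than as its individual words.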

The compositional structure of the RNN Grammar means that it is naturally encouraged to summarize a constituent based on words that are closer to the top level, rather than words that are nested many levels deep. In our running example, “keys” is closer to the top level of the main NP, while “cupboard” is nested inside a prepositional phrase, so we expect the RNN Grammar to lean more heavily on “keys” when building a representation of the main NP. This is exactly what we want in order to predict the correct verb form! Empirically, this inductive bias towards using syntactic distance helps with the subject-verb agreement task: the RNN Grammar gets only 9.4% error on sentences with 4 attractors. Using syntactic information at training time does make language models better at predicting syntax-sensitive dependencies, but only if the model architecture makes smart use of the available tree structure.

As mentioned earlier, one important caveat is that the RNN Grammar gets to use the predicted parse tree from an external parser. What if the predicted parse of the prefix leaks information about the correct verb? Moreover, reliance on an external parser also leaks information from another model, so it’s unclear whether the RNN Grammar itself has really “learned” about these syntactic relationships. Kuncoro et al. address these objections by re-running the experiments using a predicted parse of the prefix generated by the RNN Grammar itself. They use a beam search method proposed by Fried et al. to estimate the most likely parse tree structure, according to the RNN Grammar, for the words before the verb. This predicted parse tree fragment is then used by the RNN Grammar to predict what the verb should be, instead of the tree generated by a separate parser. The RNN Grammar still does well in this setting; in fact, it does somewhat better (7.1% error with 4 attractors present). In short, the RNN Grammar does better than the LSTM baselines at predicting the correct verb, and it does so by first predicting the tree structure of the words before the verb, then using this tree structure to predict the verb itself.

(Note: a previous version of this post incorrectly claimed that the above experiments used a separate incremental parser to parse the prefix.)

Neural language models with sufficient capacity can learn to capture long-range syntactic dependencies. This is true even for very generic model architectures like LSTMs, though models that explicitly model syntactic structure to form their internal representations are even better. We were able to quantify this by leveraging a particular type of syntax-sensitive dependency (subject-verb number agreement), and focusing on rare and challenging cases (sentences with one or more attractors), rather than the average case, which can be solved heuristically.

There are many details I’ve omitted, such as a discussion in Kuncoro et al. of alternative RNN Grammar configurations. Linzen et al. also explore other training objectives besides just language modeling.

If you’ve gotten this far, you might also enjoy these highly related papers:

- Gulordava et al. “Colorless green recurrent networks dream hierarchically.” NAACL 2018. This paper actually came out a bit before Kuncoro et al., and has similar findings regarding LSTM size. But the main point of this paper is to determine whether the LSTM is actually learning syntax, or whether it is using collocational/frequency-based information. For example, given “dogs in the neighborhood often bark/barks,” knowing that barking is something that dogs can do but neighborhoods can’t is sufficient to guess the correct form. To test this, they construct a new test set where content words are replaced with other content words of the same type, resulting in nonce sentences with equivalent syntax. The LSTM language models do somewhat worse on this data but still quite well, again suggesting that they do learn about syntax.

- Yoav Goldberg. “Assessing BERT’s Syntactic Abilities”. With the recent success of BERT, a natural question is whether BERT learns these same sorts of syntactic relationships. Impressively, it does very well on the verb prediction task, getting 3-4% error rates across the board for 1, 2, 3, or 4 attractors. It’s worth noting that these numbers are not directly comparable with the numbers in the rest of the post, both because BERT sees the whole sentence and for data processing reasons.
