Reinforcement learning provides a conceptual framework for autonomous agents to learn from experience, analogously to how one might train a pet with treats. But practical applications of reinforcement learning are often far from natural: instead of using RL to learn through trial and error by actually attempting the desired task, typical RL applications use a separate (usually simulated) training phase. For example, AlphaGo did not learn to play Go by competing against thousands of humans, but rather by playing against itself in simulation. While this kind of simulated training is appealing for games where the rules are perfectly known, applying it to real-world domains such as robotics can require a range of complex approaches, such as the use of simulated data, or instrumenting real-world environments in various ways to make training feasible under laboratory conditions. Can we instead devise reinforcement learning systems for robots that allow them to learn directly “on-the-job”, while performing the task that they are required to do? In this blog post, we will discuss ReLMM, a system that we developed that learns to clean up a room directly with a real robot via continual learning.

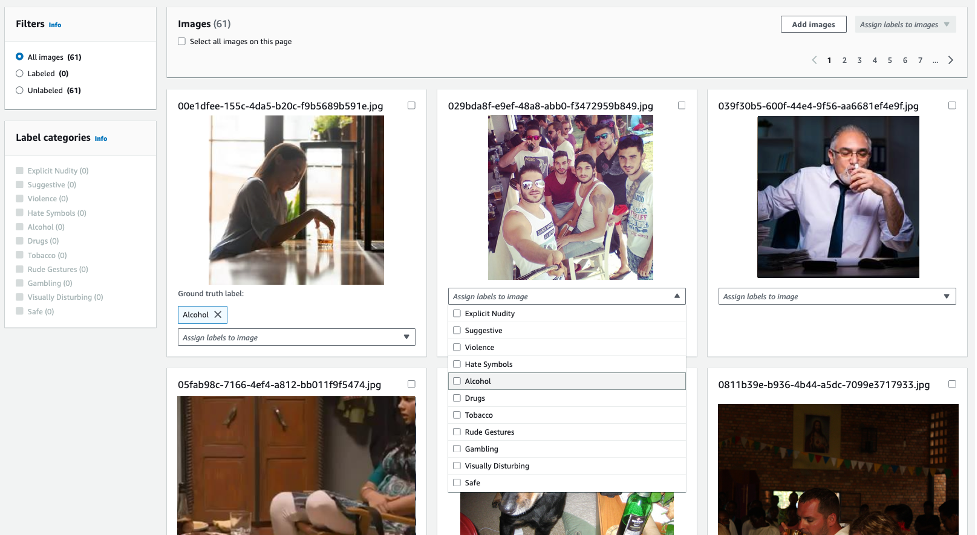

We evaluate our method on different tasks that range in difficulty. The top-left task has uniform white blobs to pick up with no obstacles, while other rooms have objects of diverse shapes and colors, obstacles that increase navigation difficulty and obscure the objects, and patterned rugs that make it difficult to see the objects against the ground.

The main obstacle to “on-the-job” training in the real world is the difficulty of collecting more experience, which is often prohibitive. If we can make training in the real world easier, by making the data gathering process more autonomous without requiring human monitoring or intervention, we can further benefit from the simplicity of agents that learn from experience. In this work, we design an “on-the-job” mobile robot training system for cleaning by learning to grasp objects throughout different rooms.

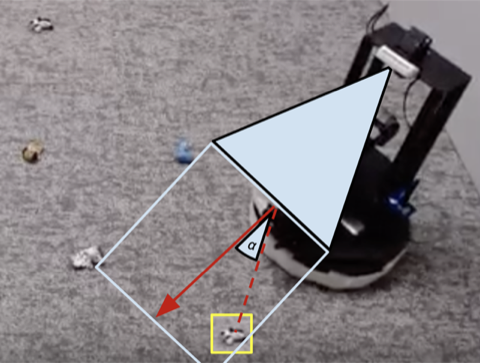

People are not born one day and performing job interviews the next. There are many levels of tasks people learn before they apply for a job, as we start with the easier ones and build on them. In ReLMM, we make use of this concept by allowing robots to train common, reusable skills, such as grasping, by first encouraging the robot to prioritize training these skills before learning later skills, such as navigation. Learning in this fashion has two advantages for robotics. The first advantage is that when an agent focuses on learning a skill, it is more efficient at collecting data around the local state distribution for that skill.

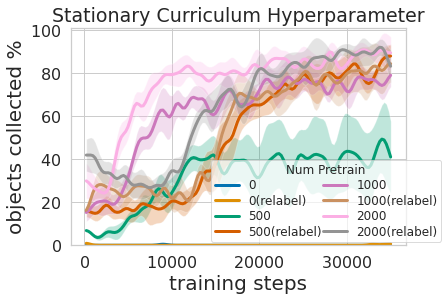

That is shown in the figure above, where we evaluated the amount of prioritized grasping experience needed to result in efficient mobile manipulation training. The second advantage of a multi-level learning approach is that we can inspect the models trained for different tasks and ask them questions, such as “can you grasp anything right now?”, which is helpful for the navigation training that we describe next.
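A minimal sketch of these two ideas, not the ReLMM implementation: a grasp-first schedule and a simple “can you grasp anything right now?” query. The function names, the `grasp_warmup_attempts` threshold, and the `0.5` cutoff are hypothetical values chosen for illustration.

```python
def skills_to_train(num_grasp_attempts, grasp_warmup_attempts=500):
    """Decide which skills receive updates under a grasp-first schedule.

    Assumption: grasping is trained alone until a fixed number of grasp
    attempts has been collected, after which navigation is trained as well.
    """
    if num_grasp_attempts < grasp_warmup_attempts:
        return ("grasping",)
    return ("grasping", "navigation")


def can_grasp_now(predicted_success_prob, threshold=0.5):
    """Query the grasp model: is a grasp likely to succeed from this state?

    `predicted_success_prob` stands in for the output of a trained grasp
    success model; the threshold is an illustrative choice.
    """
    return predicted_success_prob >= threshold


if __name__ == "__main__":
    print(skills_to_train(num_grasp_attempts=120))
    print(skills_to_train(num_grasp_attempts=800))
    print(can_grasp_now(0.7))
```

Keeping the query separate from the schedule mirrors the modular structure: the navigation side only needs the grasp model's answer, not its internals.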

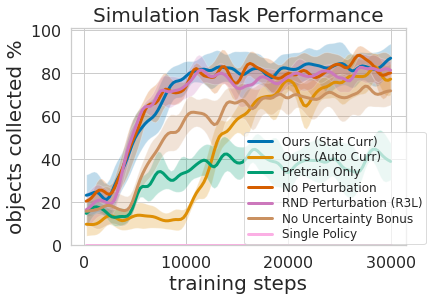

Training this multi-level policy was not only more efficient than learning both skills at the same time, but it also allowed the grasping controller to inform the navigation policy. A model that estimates the uncertainty in its grasp success (Ours above) can be used to improve navigation exploration by skipping areas without graspable objects, in contrast to No Uncertainty Bonus, which does not use this information. The model can also be used to relabel data during training, so that in the unlucky case when the grasping model fails to grasp an object within its reach, the grasping policy can still provide some signal by indicating that an object was there but that it has not yet learned how to grasp it. Moreover, learning modular models has engineering benefits. Modular training allows for reusing skills that are easier to learn and can enable building intelligent systems one piece at a time. This is beneficial for many reasons, including safety evaluation and understanding.
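One way to picture the uncertainty bonus and the relabeling step is the sketch below. It rests on assumptions that are not taken from the paper: uncertainty is approximated by the disagreement (standard deviation) of an ensemble of grasp-success predictions, and the names `navigation_reward`, `relabel_navigation_reward`, `bonus_scale`, and `graspable_threshold` are hypothetical.

```python
import numpy as np


def navigation_reward(grasp_succeeded, ensemble_success_probs, bonus_scale=1.0):
    """Navigation reward: grasp outcome plus an exploration bonus.

    Assumption: the bonus is the standard deviation of the ensemble's
    predicted grasp-success probabilities, a common uncertainty proxy.
    """
    probs = np.asarray(ensemble_success_probs, dtype=float)
    uncertainty = float(probs.std())
    return float(grasp_succeeded) + bonus_scale * uncertainty


def relabel_navigation_reward(grasp_succeeded, ensemble_success_probs,
                              graspable_threshold=0.5):
    """Relabel a failed grasp when the model believes an object was in reach.

    If the grasp failed but the mean predicted success is high, navigation
    is still credited for bringing the robot to a graspable object.
    """
    if grasp_succeeded:
        return 1.0
    mean_prob = float(np.mean(ensemble_success_probs))
    return 1.0 if mean_prob >= graspable_threshold else 0.0


if __name__ == "__main__":
    # Models agree nothing is graspable: no bonus, so the area is not worth revisiting.
    print(navigation_reward(False, [0.02, 0.03, 0.02]))
    # Models disagree: the bonus encourages the robot to explore here.
    print(navigation_reward(False, [0.1, 0.9, 0.5]))
    # Failed grasp, but the model is confident an object was within reach.
    print(relabel_navigation_reward(False, [0.8, 0.9, 0.85]))
```

Under this sketch, empty floor where the ensemble confidently predicts failure earns neither reward nor bonus, which is what lets navigation skip it.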

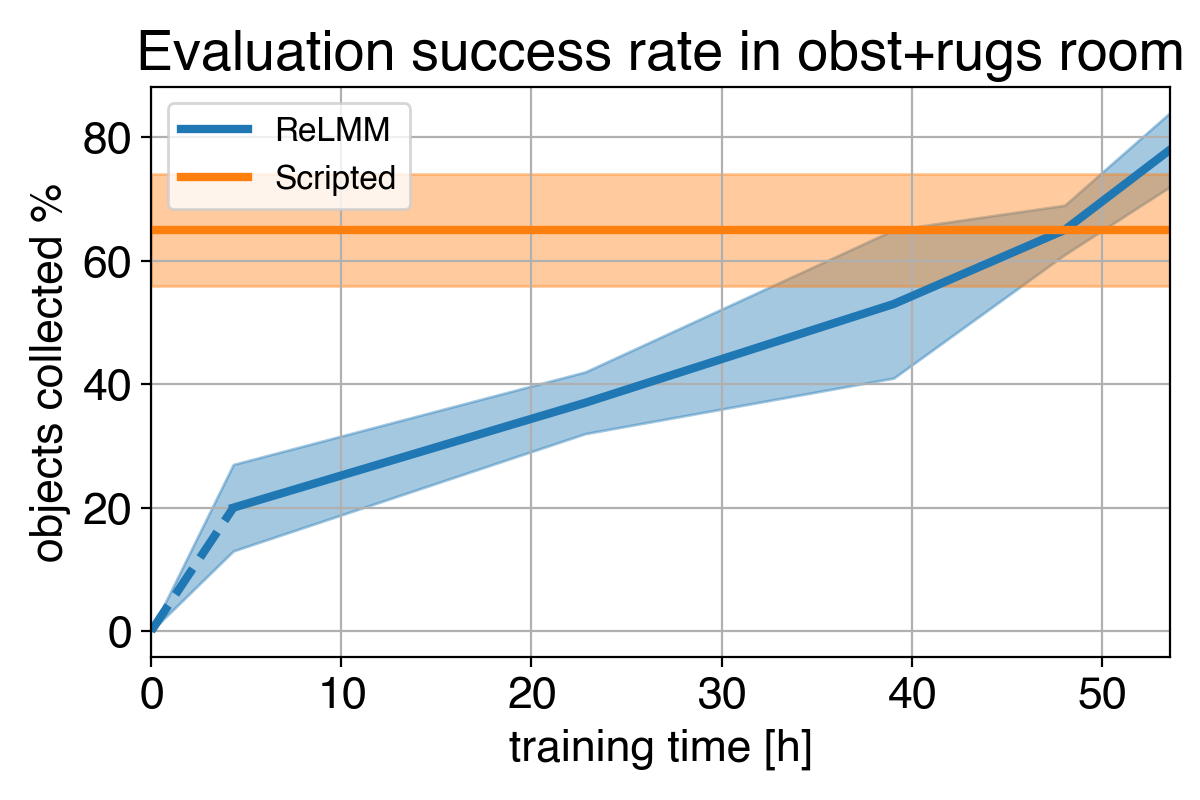

Many robotics tasks that we see today can be solved to varying levels of success using hand-engineered controllers. For our room cleaning task, we designed a hand-engineered controller that locates objects using image clustering and turns towards the nearest detected object at each step. This expertly designed controller performs very well on the visually salient balled socks and takes reasonable paths around the obstacles, but it cannot learn an optimal path to collect the objects quickly, and it struggles with visually diverse rooms. As shown in video 3 below, the scripted policy gets distracted by the white patterned carpet while trying to locate more white objects to grasp.

1)

2)

3)

4)

We show a comparison between (1) our policy at the beginning of training, (2) our policy at the end of training, and (3) the scripted policy. In (4) we can see the robot’s performance improve over time, and eventually exceed the scripted policy at quickly collecting the objects in the room.
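To make the scripted baseline concrete, here is a rough sketch of a controller in that spirit. It is not the controller used in our experiments: connected-component grouping stands in for “image clustering”, the brightness threshold, the dead band, and the rule that objects lower in the image are nearer are all assumptions, and the names `find_clusters` and `scripted_turn` are hypothetical.

```python
from collections import deque

import numpy as np


def find_clusters(mask):
    """Group True pixels into 4-connected clusters; return (row, col) centroids."""
    rows, cols = mask.shape
    visited = np.zeros((rows, cols), dtype=bool)
    centroids = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r, c] or visited[r, c]:
                continue
            # Breadth-first flood fill over one cluster of bright pixels.
            queue = deque([(r, c)])
            visited[r, c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny, nx] and not visited[ny, nx]):
                        visited[ny, nx] = True
                        queue.append((ny, nx))
            ys, xs = zip(*pixels)
            centroids.append((sum(ys) / len(ys), sum(xs) / len(xs)))
    return centroids


def scripted_turn(gray_image, white_threshold=200, deadband_px=2.0):
    """Return 'left', 'right', 'forward', or 'search' for a grayscale image."""
    mask = np.asarray(gray_image) >= white_threshold  # hand-tuned for white objects
    centroids = find_clusters(mask)
    if not centroids:
        return "search"
    # Assumption: a larger row index means the object is closer to the robot.
    _, target_col = max(centroids, key=lambda rc: rc[0])
    offset = target_col - (mask.shape[1] - 1) / 2.0
    if offset < -deadband_px:
        return "left"
    if offset > deadband_px:
        return "right"
    return "forward"


if __name__ == "__main__":
    image = np.zeros((10, 10), dtype=np.uint8)
    image[2, 8] = 255  # far object, upper right
    image[8, 1] = 255  # near object, lower left
    print(scripted_turn(image))
```

The fixed `white_threshold` is exactly the kind of tuning that breaks down on a white patterned carpet: any bright region passes the threshold and becomes a candidate target.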

Given that we can use experts to code this hand-engineered controller, what is the purpose of learning? An important limitation of hand-engineered controllers is that they are tuned for a particular task, for example, grasping white objects. When diverse objects are introduced, which differ in color and shape, the original tuning may no longer be optimal. Rather than requiring further hand-engineering, our learning-based method is able to adapt itself to various tasks by collecting its own experience.

However, the most important lesson is that even if the hand-engineered controller is capable, the learning agent eventually surpasses it given enough time. This learning process is itself autonomous and takes place while the robot is performing its job, making it comparatively inexpensive. This shows the potential of learning agents, which can also be thought of as working out a general way to perform an “expert manual tuning” process for any kind of task. Learning systems have the ability to create the entire control algorithm for the robot, and are not limited to tuning a few parameters in a script. The key step in this work allows these real-world learning systems to autonomously collect the data needed to enable the success of learning methods.

This post is based on the paper “Fully Autonomous Real-World Reinforcement Learning with Applications to Mobile Manipulation”, presented at CoRL 2021. You can find more details in our paper, on our website, and in the video. We provide code to reproduce our experiments. We thank Sergey Levine for his valuable feedback on this blog post.
