Advances in AI have produced capable systems whose decisions are hard to explain, raising concerns about deploying untrustworthy AI in daily life and the economy. Understanding neural networks matters for trust, for ethical concerns such as algorithmic bias, and for scientific applications that require model validation. Multilayer perceptrons (MLPs) are widely used but are less interpretable than attention layers. Model renovation aims to improve interpretability by introducing specially designed components. Kolmogorov-Arnold Networks (KANs), which build on the Kolmogorov-Arnold representation theorem, offer improved interpretability and accuracy. Recent work extends KANs to arbitrary widths and depths using B-splines, an approach known as Spl-KAN.

Researchers from Boise State University have developed Wav-KAN, a neural network architecture that improves interpretability and performance by using wavelet functions within the KAN framework. Unlike traditional MLPs and Spl-KAN, Wav-KAN efficiently captures both high- and low-frequency components of the data, which improves training speed, accuracy, robustness, and computational efficiency. Because it adapts to the structure of the data, Wav-KAN avoids overfitting. The work presents Wav-KAN as a powerful, interpretable neural network tool with applications across many fields and with implementations in frameworks such as PyTorch and TensorFlow.

Wavelets and B-splines are both key methods for function approximation, and each has its own benefits and drawbacks in neural networks. B-splines give smooth, locally controlled approximations but struggle with high-dimensional data. Wavelets excel at multi-resolution analysis: they handle both high- and low-frequency content, which makes them well suited to feature extraction and efficient network architectures. According to the authors, Wav-KAN outperforms Spl-KAN and MLPs in training speed, accuracy, and robustness because its wavelets capture the structure of the data without overfitting. Wav-KAN is also parameter-efficient and does not rely on a grid, which the authors argue makes it better suited to complex tasks, and it uses batch normalization to improve performance further.
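To make the multi-resolution idea concrete, the sketch below evaluates one mother wavelet at two scales. It is only an illustration, not the paper's code: the choice of the Mexican hat wavelet, the missing normalizing constant, and the helper names `scaled_wavelet` and `support_width` are all assumptions made here for clarity.

```python
import math


def mexican_hat(t: float) -> float:
    """Mexican hat (Ricker) mother wavelet, without a normalizing constant."""
    return (1.0 - t * t) * math.exp(-0.5 * t * t)


def scaled_wavelet(x: float, scale: float, translation: float) -> float:
    """Shift the wavelet by `translation` and stretch it by `scale`."""
    return mexican_hat((x - translation) / scale)


def support_width(scale: float, threshold: float = 1e-3, step: float = 0.01) -> float:
    """Approximate width of the region where |wavelet| exceeds `threshold`."""
    xs = [i * step for i in range(-3000, 3001)]  # grid over [-30, 30]
    active = [x for x in xs if abs(scaled_wavelet(x, scale, 0.0)) > threshold]
    return max(active) - min(active)


# A small scale gives a narrow wavelet that responds to fine, high-frequency detail.
narrow = support_width(scale=0.5)
# A large scale gives a wide wavelet that responds to slow, low-frequency trends.
wide = support_width(scale=4.0)

print(f"width at scale 0.5: {narrow:.2f}")
print(f"width at scale 4.0: {wide:.2f}")
```

The width grows in proportion to the scale, so a network that learns a scale and a translation for each wavelet can place narrow wavelets where the data changes quickly and wide ones where it changes slowly.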

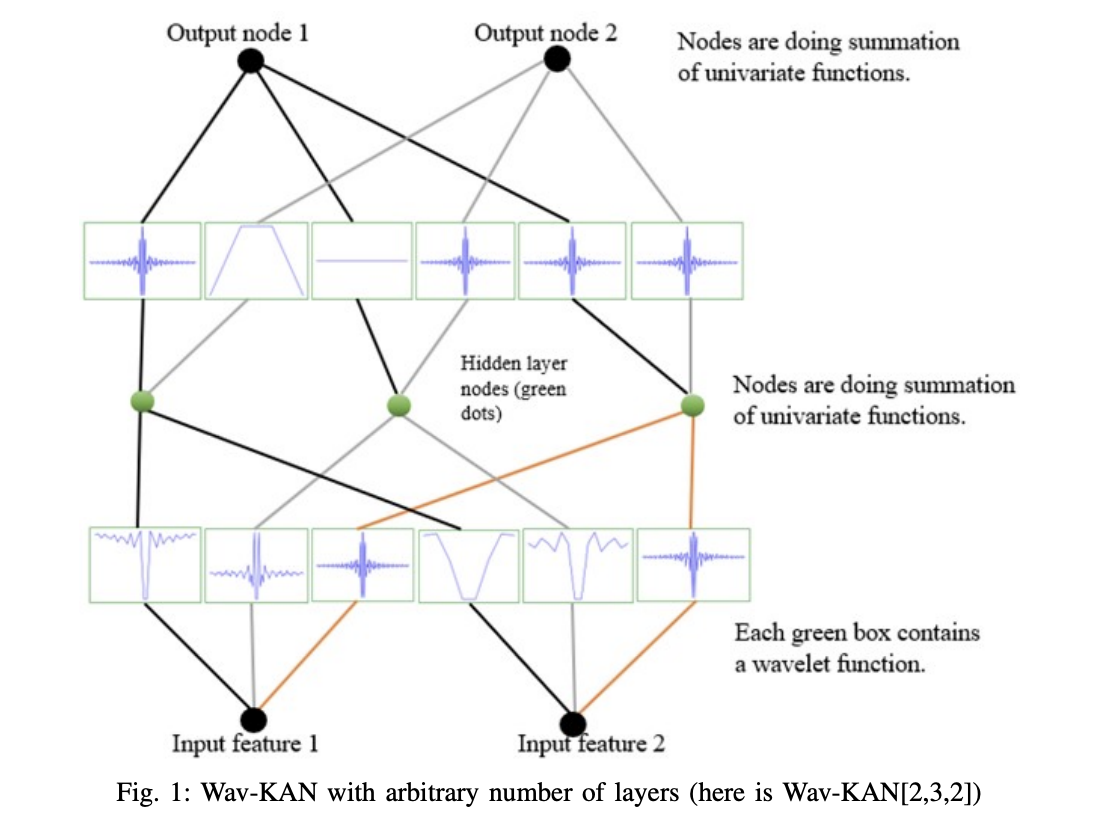

KANs are inspired by the Kolmogorov-Arnold representation theorem, which states that any continuous multivariate function on a bounded domain can be written as a finite sum of univariate functions, each applied to a sum of univariate functions of the individual inputs. In a KAN, the traditional scalar weights and fixed activation functions are replaced: every "weight" is itself a learnable univariate function. This lets a KAN transform its inputs through adaptable functions, which can give more precise function approximation with fewer parameters. During training, these functions are optimized to minimize the loss function, and because they model the relationships in the data directly, they improve both accuracy and interpretability. KANs thus offer a flexible and efficient alternative to traditional neural networks.
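The idea of a learnable function on every edge can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the class names `WaveletEdge` and `WaveletKANLayer`, the parameterization `w * psi((x - b) / a)`, the Mexican hat wavelet, and the initial values are all hypothetical choices. Only the forward pass is shown; gradient-based training of the parameters is omitted.

```python
import math
import random


def mexican_hat(t: float) -> float:
    """Mexican hat mother wavelet, without a normalizing constant."""
    return (1.0 - t * t) * math.exp(-0.5 * t * t)


class WaveletEdge:
    """One univariate function on one edge: w * psi((x - b) / a)."""

    def __init__(self, rng: random.Random) -> None:
        self.weight = rng.uniform(-1.0, 1.0)  # w, would be learned
        self.scale = 1.0                      # a, would be learned
        self.translation = 0.0                # b, would be learned

    def __call__(self, x: float) -> float:
        return self.weight * mexican_hat((x - self.translation) / self.scale)


class WaveletKANLayer:
    """Each output node sums one univariate function per input node."""

    def __init__(self, in_features: int, out_features: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.edges = [
            [WaveletEdge(rng) for _ in range(in_features)]
            for _ in range(out_features)
        ]

    def forward(self, x: list) -> list:
        return [sum(edge(xi) for edge, xi in zip(row, x)) for row in self.edges]


# Stacking two layers gives sums of univariate functions applied to
# sums of univariate functions, mirroring the form of the theorem.
layer1 = WaveletKANLayer(in_features=3, out_features=4, seed=0)
layer2 = WaveletKANLayer(in_features=4, out_features=2, seed=1)
output = layer2.forward(layer1.forward([0.2, -0.5, 1.0]))
print(output)
```

Note that there is no separate activation function: the nonlinearity lives on the edges, and each node only adds up what its incoming edges produce.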

Experiments with the KAN model on the MNIST dataset using several wavelet transformations showed promising results. The study used 60,000 training images and 10,000 test images, with wavelet types including Mexican hat, Morlet, Derivative of Gaussian (DOG), and Shannon. Both Wav-KAN and Spl-KAN used batch normalization and a node structure of [28*28,32,10]. The models were trained for 50 epochs over five trials with the AdamW optimizer and cross-entropy loss. The results indicated that wavelets such as DOG and Mexican hat outperformed Spl-KAN by capturing essential features effectively while remaining robust to noise, which underlines how much the choice of wavelet matters.
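For reference, three of the wavelet types named above can be written as short Python functions. These are common textbook forms and not necessarily the exact expressions used in the study: the normalizing constants are dropped, the Morlet center frequency `omega0=5.0` is an assumed default, and the Shannon wavelet is left out of this sketch. The helper `numeric_mean` is hypothetical and simply checks that each wavelet integrates to approximately zero, a basic property of wavelets.

```python
import math


def mexican_hat(t: float) -> float:
    """Mexican hat: negative second derivative of a Gaussian (unnormalized)."""
    return (1.0 - t * t) * math.exp(-0.5 * t * t)


def morlet(t: float, omega0: float = 5.0) -> float:
    """Real Morlet wavelet: a cosine under a Gaussian envelope (omega0 assumed)."""
    return math.cos(omega0 * t) * math.exp(-0.5 * t * t)


def derivative_of_gaussian(t: float) -> float:
    """DOG: first derivative of a Gaussian (unnormalized)."""
    return -t * math.exp(-0.5 * t * t)


WAVELETS = {
    "mexican_hat": mexican_hat,
    "morlet": morlet,
    "dog": derivative_of_gaussian,
}


def numeric_mean(psi, lo: float = -10.0, hi: float = 10.0, n: int = 20000) -> float:
    """Riemann-sum approximation of the integral of psi over [lo, hi]."""
    step = (hi - lo) / n
    return sum(psi(lo + i * step) for i in range(n + 1)) * step


means = {name: numeric_mean(psi) for name, psi in WAVELETS.items()}
for name, value in means.items():
    print(f"{name}: integral is about {value:.2e}")
```

Because the wavelets differ in shape (symmetric, oscillating, or antisymmetric), swapping one for another changes which features each edge function responds to, which is consistent with the reported sensitivity to wavelet choice.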

In conclusion, Wav-KAN is a new neural network architecture that integrates wavelet functions into KAN to improve interpretability and performance. By using the multiresolution analysis of wavelets, Wav-KAN captures complex data patterns more effectively than traditional MLPs and Spl-KANs. The experiments show that Wav-KAN achieves higher accuracy and faster training thanks to its combination of wavelet transforms and the Kolmogorov-Arnold representation theorem. This structure improves parameter efficiency and model interpretability, making Wav-KAN a valuable tool for a range of applications. Future work will optimize the architecture further and expand its implementation in machine learning frameworks such as PyTorch and TensorFlow.
