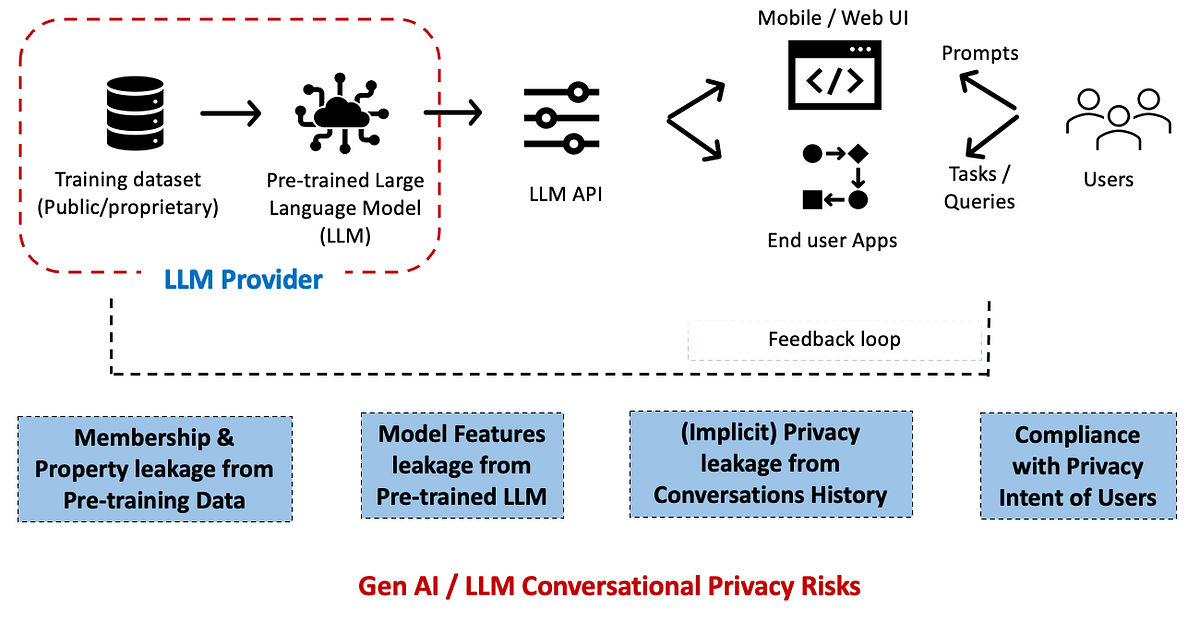

In this article, we focus on the privacy risks of large language models (LLMs), with respect to their scaled deployment in enterprises.

We also see a growing (and worrisome) trend where enterprises are applying the privacy frameworks and controls that they had designed for their data science / predictive analytics pipelines as-is to Gen AI / LLM use-cases.

This is clearly inefficient (and risky), and we need to adapt the enterprise privacy frameworks, checklists and tooling to take into account the novel and differentiating privacy aspects of LLMs.

Let us first consider the privacy attack scenarios in a traditional supervised ML context [1, 2]. This covers the majority of the AI/ML world today, which consists mostly of machine learning (ML) / deep learning (DL) models developed with the goal of solving a prediction or classification task.

There are mainly two broad categories of inference attacks: membership inference and property…
